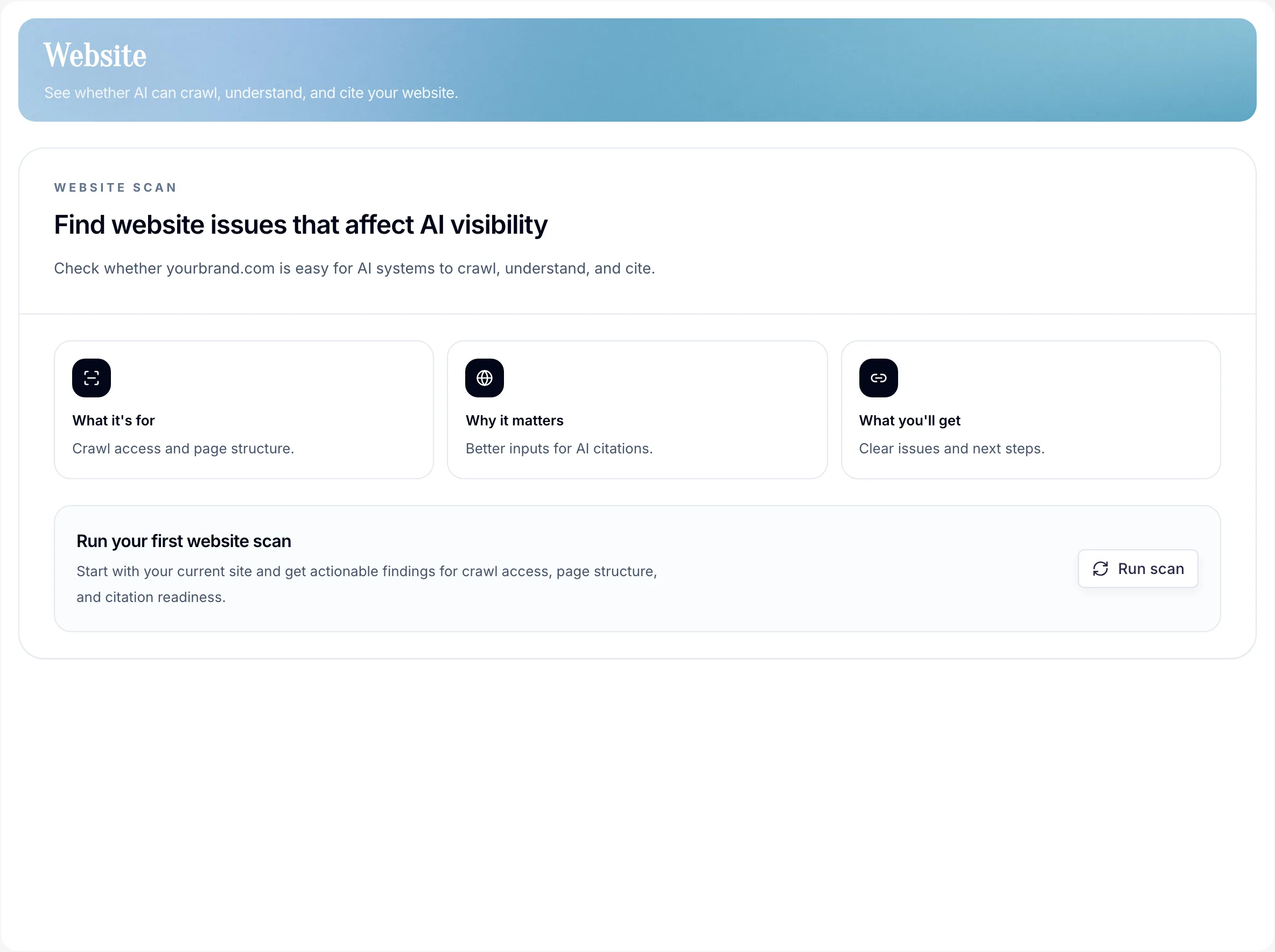

What the Website page checks

The Website page checks whether your site is reachable, parseable, and citable for AI systems. Think of it as an evidence-first crawlability check for AI search, to run before you judge Monitoring or Sources.

What it tests

- Domain reachable

- Pages discovered

- robots.txt, sitemap.xml, and llms.txt status

- Metadata and machine-readable signals

- Pass/fail checks per page

- Prompt-aware coverage evidence where monitored prompts and owned pages line up
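To get a feel for the file-status part of the list above, here is a minimal sketch of a local spot check. It is not PromptScout's implementation: the function names `http_status` and `file_statuses` are hypothetical, and the sketch assumes the three files live at the site root.

```python
import urllib.error
import urllib.request
from urllib.parse import urljoin

# Files named in the checklist above; assumed to live at the site root.
FILES = ("robots.txt", "sitemap.xml", "llms.txt")


def http_status(url, timeout=10):
    """Return the HTTP status code for url, or None if the host is unreachable."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        # The server answered, but with an error status such as 404.
        return err.code
    except OSError:
        # DNS failure, refused connection, timeout, and similar.
        return None


def file_statuses(base_url, fetch=http_status):
    """Map each well-known file name to the status returned by fetch."""
    return {name: fetch(urljoin(base_url, "/" + name)) for name in FILES}


# Offline demonstration with a stub in place of a real HTTP request.
def stub_fetch(url):
    return 200 if url.endswith("robots.txt") else 404


print(file_statuses("https://example.com/blog/", fetch=stub_fetch))
```

Passing `fetch` as a parameter keeps the sketch testable without network access. Calling `file_statuses("https://your-domain.example")` with the default `fetch` would issue real requests.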

When to run a scan

- After any meaningful site change — redesign, migration, CMS update.

- When monitoring results look weak or inconsistent.

- Before assuming a visibility problem is a content problem.

How reruns work

Website audits are deterministic: a rerun records what PromptScout can fetch and evaluate at that moment. It does not guess why a model cited or ignored a page.

- Free teams keep the onboarding bootstrap audit as their Website state.

- Paid teams can run dashboard full-site audits under the current daily limit.

- Focused page audits have their own per-page daily limit and do not replace the latest full-site state.

- Older runs stay available as evidence, so fixes can be compared against later scans.
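Comparing an older run against a later scan comes down to a diff of pass/fail results. The sketch below assumes each run can be reduced to a dictionary of check name to pass/fail. That shape, the check names, and the `diff_runs` helper are all hypothetical and are not a PromptScout export format.

```python
def diff_runs(before: dict[str, bool], after: dict[str, bool]) -> dict[str, list[str]]:
    """Classify checks in the later run relative to the earlier one."""
    # Failed before, passes now.
    fixed = sorted(k for k, ok in after.items() if ok and before.get(k) is False)
    # Passed before, fails now.
    regressed = sorted(k for k, ok in after.items() if not ok and before.get(k) is True)
    # Present only in the later run.
    new = sorted(k for k in after if k not in before)
    return {"fixed": fixed, "regressed": regressed, "new": new}


# Hypothetical check names, for illustration only.
earlier = {"domain_reachable": True, "robots_txt": False, "sitemap_xml": True}
later = {"domain_reachable": True, "robots_txt": True, "sitemap_xml": False, "llms_txt": True}

print(diff_runs(earlier, later))
# {'fixed': ['robots_txt'], 'regressed': ['sitemap_xml'], 'new': ['llms_txt']}
```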

How to use the results

- Fix foundational blockers first — reachability, wrong domain, missing files.

- Then check whether crawlable pages are also cited pages in Sources.

- Re-run Website after fixes, then re-run Monitoring to see if visibility moves.

This sequence stops you from optimizing pages AI still can't reach and helps the rest of your workflow stay grounded in real site conditions.

Keep these files healthy

- robots.txt: don't accidentally block AI crawlers.

- sitemap.xml: fresh and complete.

- llms.txt: describe the pages you want AI systems to prioritize.
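One way to catch an accidental robots.txt block before running a scan is to test your rules locally with Python's standard `urllib.robotparser`. This is a sketch under assumptions: the crawler names in `AI_CRAWLERS` are examples rather than a complete or official list, and `blocked_crawlers` is a hypothetical helper name.

```python
from urllib.robotparser import RobotFileParser

# Example user-agent tokens only; adjust to the crawlers you care about.
AI_CRAWLERS = ("GPTBot", "ClaudeBot", "PerplexityBot")


def blocked_crawlers(robots_txt: str, url: str, agents=AI_CRAWLERS) -> list[str]:
    """Return the agents that the given robots.txt text disallows from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


# A sample file that blocks one AI crawler site-wide, probably by accident.
sample = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

print(blocked_crawlers(sample, "https://example.com/pricing"))
# ['GPTBot']
```

An empty list means none of the listed agents is blocked for that URL. The check covers only robots.txt rules, not firewall or CDN blocking.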

Next steps

- Check citation coverage in Sources.

- Re-run Monitoring after fixes.

- Use Content when Website gaps and Insights point at the same page opportunity.